Introduction – Artificial intelligence brings new ethical challenges and the need for professional registration

As the use of artificial intelligence (AI) rapidly develops and demand continues to grow, the ethical use of these systems faces new and serious challenges.

These problems are currently most evident in emerging generative artificial intelligence platforms (used to create content such as text and images). They include misinformation, intellectual property rights and plagiarism, bias in training data, and the ability to influence and manipulate public opinion (such as during elections).

The Post Office Horizon IT scandal highlights the critical importance of independent professional and ethical standards in the application, development and deployment of technology.

It is right that the UK is committed to taking a leadership role in developing safe, trustworthy, responsible and sustainable artificial intelligence, as demonstrated by the November 2023 Summit and the associated Bletchley Declaration [1].

We believe these goals can only be achieved if:

- Practitioners of artificial intelligence and other high-risk technologies are licensed professionals who meet common standards of responsibility, ethics, and competence.

- Non-technical CEOs, leadership teams, and boards of directors who make decisions about AI resourcing and development in their organizations share that responsibility and develop a deeper understanding of the technical and ethical issues.

This article makes the following recommendations to support the ethical and safe use of artificial intelligence:

- Every technical staff member in a high-risk IT role, especially artificial intelligence, should be a registered professional who meets independent standards of ethical practice, responsibility and competency.

- Government, industry and professional bodies should work together to support and develop these standards to build public trust and create expectations of good practice.

- UK organizations should publish policies for customers and employees on the ethical use of artificial intelligence in any relevant systems, and these policies should also apply to leaders who are not technical experts, including chief executives.

- Technology professionals should expect strong and supported reporting and escalation pathways when they believe they have been asked to behave unethically or deploy artificial intelligence in a way that harms colleagues, customers or society.

- The UK Government should commit to taking the lead and supporting UK organizations to develop world-leading ethical standards.

- Professional bodies such as the BCS should support this work by seeking and publishing regular research on the challenges faced by their members and advocating for the support and guidance they need and expect.

2023 BCS Ethics Survey – Key Findings

The BCS ethics expert panel surveyed IT professionals in summer 2023, ahead of the AI Safety Summit, to help identify the challenges practitioners face [2]. This article outlines the key findings and recommends actions to help address those challenges. The online survey was sent to all UK BCS members in August 2023, and a total of 1,304 people responded. The results indicate that BCS members view AI ethics as a priority, that many have encountered ethical issues personally, and that the area is problematic in many ways. Companies handle ethical issues in technology inconsistently, and many organizations reportedly offer employees no support. In summary:

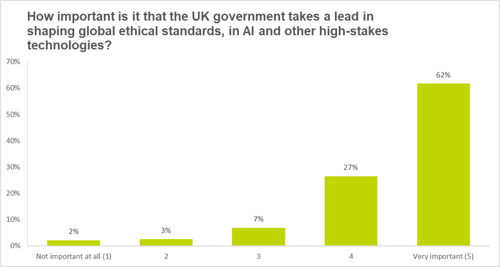

- 88% of participants believe it is important for the UK government to take a leadership role in setting global ethical standards for artificial intelligence and other high-risk technologies.

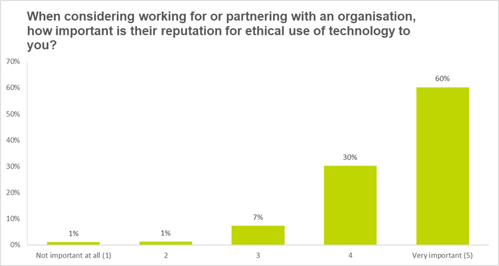

- When considering working for or with an organization, 90% say its reputation for ethical use of artificial intelligence and other emerging technologies is important.

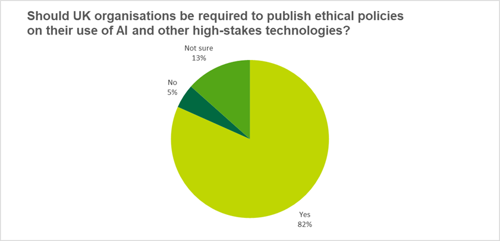

- 82% believe UK organizations should be required to publish ethics policies around the use of artificial intelligence and other high-risk technologies.

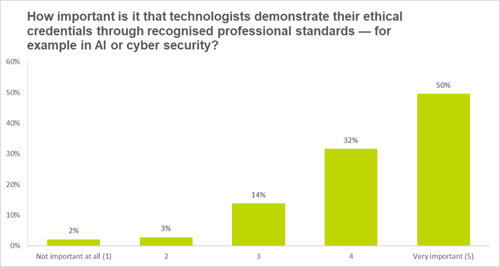

- 81% of respondents believe it is important for technologists to demonstrate their ethical credentials through recognized professional standards.

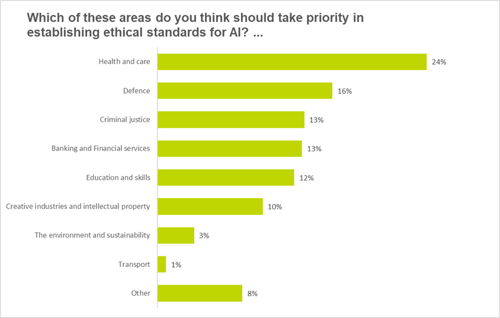

- The largest number of respondents (24%) said health and care should be prioritized when developing ethical standards for artificial intelligence.

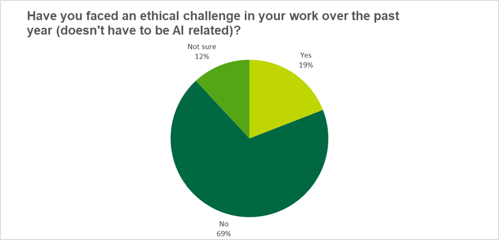

- 19% of respondents faced ethical challenges at work in the past year.

- Employer support varied: despite some examples of good practice, many respondents said they had little or no support in dealing with ethical issues related to technology.

Analysis of results:

Priority areas for establishing ethical standards for artificial intelligence

Nearly a quarter of respondents see health and care as a key area, which is not surprising given the clear impact AI could have on surgery, diagnosis and patient interactions. However, respondents also named other areas, such as defense, criminal justice, and banking and finance, where the use of AI could have harmful consequences and caution is required.

Ethical Issues in Professional IT Practice

When asked whether they had faced ethical challenges on the job in the past year, 69% of respondents answered “no” and 19% answered “yes.” This suggests that around one in five BCS members faces an ethical challenge each year, which makes dealing with such challenges at some point in a career almost inevitable and supports the long-standing emphasis on incorporating ethics into BCS certification training. Interestingly, 12% of respondents were unsure whether they had faced an ethical challenge, suggesting either a lack of clarity about ethical concepts or a pace of change that precludes simple categorization of ethical issues.

Organizational reputation for ethical use of artificial intelligence and other high-risk technologies

Awareness of the importance of ethics, and of the potential harm technology can cause, explains why respondents are strongly inclined to work with organizations that have a strong reputation for ethical use of technology: 90% of BCS members surveyed thought this was either important or very important. This challenges organizations to find appropriate mechanisms for demonstrating their commitment to ethical work, one of which is the publication of an ethics policy.

Require the publication of policies on the ethical use of artificial intelligence and other high-risk technologies

Respondents hold the organizations they work with to high ethical standards, as evidenced by their strong support for requiring organizations to publish policies on their ethical use of technology, including artificial intelligence.

More than 80% of respondents supported this, with only 5% firmly opposed. This position is important because it not only supports the development of ethics policies and their application, but also requires the publication of these policies.

The importance of government leadership for artificial intelligence and technology ethics

The previous question already indicated that respondents believe government has a key role to play in ensuring the ethical use of critical technologies such as artificial intelligence. To further examine the role of government in policy and standards, we asked: “How important is it that the UK government takes the lead in setting global ethical standards in artificial intelligence and other high-risk technologies?”

88% of respondents believe it is important for the UK government to take a leadership role in setting global ethical standards for artificial intelligence and other emerging technologies. This also shows the need for political parties preparing manifestos ahead of the 2024 general election to clarify their stance on the ethical use of technology. The same applies to the devolved administrations and to policies within their areas of competence. Initiatives such as the Centre for Data Ethics and Innovation (CDEI) are a good way to develop these policies. The term of the CDEI Ethics Advisory Board ended in September 2023, and the Centre has said it will continue to seek expert input and advice in an agile manner so that it can respond to the ongoing opportunities and challenges in the changing field of artificial intelligence [3]. In November 2023, the UK government announced the establishment of the AI Safety Institute [4].

Demonstrating ethical credentials ‘very important’

Respondents clearly see the responsibilities they face as IT professionals. This can be seen in their strong support for obtaining ethical credentials, for example through ethical standards in areas such as artificial intelligence or cybersecurity. Only 5% of respondents think these are not important, and 81% think they are important or very important.

Support IT professionals on the ethical issues of artificial intelligence and other high-risk technologies

In the final question, we asked: “How does your organization support you in raising and managing [ethical] issues?”

Of those who responded, 41% said they had received no support and 35% had received “informal support” (e.g. speaking to line managers/colleagues).

The survey also revealed examples of good practice, such as the one described in the following answer:

“My employer listened to my potential ethical concerns. I received support in discussing potential issues with the client. Our client and my employer agreed to implement controls to ensure potential ethical situations were appropriately managed.”

This shows that some organizations follow good practice, engage in ethical discussions and have clear policies and procedures for employees.
